Building an AI SEO Framework Using Real Search Data

“An AI SEO framework built on real search data is a structured system for creating, optimising, and distributing content that ranks in both traditional search engines and AI-generated answers. It uses live query data, intent mapping, and authority signals — not keyword theory alone — to drive consistent, measurable organic growth.”

There's a version of AI SEO advice that circulates endlessly — optimise for entities, use structured data, write conversationally, be authoritative. It sounds reasonable. It reads well. And most of it, when I actually tested it against real search data, produced very mixed results.

I don't say that to be contrarian. I say it because I spent months following generalist AI SEO guidance while simultaneously watching my own query data tell a completely different story. The disconnect was uncomfortable, and eventually it became useful.

The framework I'm sharing in this post didn't come from a course or a guide. It came from repeatedly asking: what does the actual data say, and how do I build a system around that?

If you're trying to build content that ranks in AI-generated answers and traditional search simultaneously — and you want a system rather than a checklist — this is the post for you.

What an AI SEO Framework Actually Needs to Do

Let's be clear about what we're building before we build it.

An AI SEO framework is not a content calendar. It's not a list of writing tips. It's a structured, repeatable system that tells you what to create, how to structure it, where to distribute it, and how to measure whether it's working — all calibrated to how AI search engines and traditional search engines are currently evaluating and surfacing content.

That last part matters more than most people acknowledge. AI search and traditional search do not evaluate content in the same way. Google's traditional algorithm rewards topical depth, backlinks, and on-page optimisation. AI-generated answers (from tools like ChatGPT Search, Perplexity, and Google's AI Overviews) reward clarity, authority signals, first-person credibility, and structured extractability.

A framework that only optimises for one of those is leaving significant visibility on the table.

The shift happening right now is not a minor update. I covered the full scope of this in what is AI SEO and how it works — but the short version is that the criteria for being surfaced in AI-generated results are meaningfully different from traditional ranking factors, and most existing content systems weren't built with that in mind.

The framework in this post is designed to serve both environments simultaneously. One content system, two search surfaces, consistent performance.

Why Most AI SEO Frameworks Fail Before They Start

I've looked at a lot of AI SEO frameworks — from agency playbooks to SaaS founder guides to creator toolkits. And there's a pattern in the ones that fail.

They're built top-down.

Someone decides what an AI SEO framework should look like based on how AI search is described in industry commentary, not based on what their specific search data shows. They build a system around assumptions — about query types, about content structure, about what AI engines value — and then wonder why the results don't compound the way they expected.

The second failure is related. Most frameworks are built around content production, not content performance. They tell you how to create more content. They don't tell you how to read the signals that your existing content is sending back, and how to use those signals to make smarter decisions about what to create next.

This is the difference between a content calendar and a content system. A calendar manages volume. A system manages outcomes.

A third problem is something I've seen particularly in SaaS content — the assumption that AI SEO is primarily a technical exercise. Schema markup, entity optimisation, semantic clustering. These things matter. But they're the last 20% of the work. The first 80% is about understanding what real people are actually searching for and building content that genuinely answers those queries better than anyone else currently does.

If you've been producing consistently but not seeing compounding organic growth, I'd encourage you to read why your SaaS blog isn't growing organically — because the root cause is almost always a framework problem, not a writing problem.

What Real Search Data Actually Reveals

Here's what changed when I stopped reading about AI SEO and started looking at the data.

The Queries Driving AI Search Are Structurally Different

Traditional search queries tend to be short, fragmented, and keyword-oriented. "Best SEO tools." "Content strategy SaaS." "How to rank faster."

Queries in AI-assisted search environments are longer, more conversational, and often comparative or evaluative. "What's the difference between building a content strategy for SaaS versus a content strategy for a personal brand?" "How do I know if my SEO content is aligned with what AI search is looking for?"

That structural difference has a significant implication for content design. Content optimised for short, fragmented queries uses a different format than content that needs to answer nuanced, multi-part conversational queries. The frameworks that don't account for this produce content that serves one audience but not the other.
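To make that structural difference concrete, here is a minimal Python sketch that flags queries which look conversational rather than keyword-style. The word-count thresholds and the list of question openers are illustrative assumptions, not values taken from any search engine, so tune them against your own query data.

```python
import re

# Hypothetical list of openers that usually signal a question-form query.
QUESTION_OPENERS = {
    "what", "what's", "how", "why", "which", "when", "where", "who",
    "does", "do", "is", "can", "should",
}

def is_conversational(query: str, long_query: int = 8, question_query: int = 6) -> bool:
    """Heuristic: a query is conversational if it is long, or if it opens
    with a question word and is at least moderately long."""
    words = re.findall(r"[a-z0-9']+", query.lower())
    if not words:
        return False
    if len(words) >= long_query:
        return True
    return words[0] in QUESTION_OPENERS and len(words) >= question_query

queries = [
    "best seo tools",
    "how to rank faster",
    "How do I know if my SEO content is aligned with what AI search is looking for?",
]
for q in queries:
    label = "conversational" if is_conversational(q) else "keyword-style"
    print(f"{label:15} {q}")
```

Short question-form queries such as "how to rank faster" stay in the keyword-style bucket on purpose, because the distinction drawn above is about length and conversational phrasing together, not about question words alone.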

Impression Data Shows Where AI Search Is Paying Attention

This was one of the more unexpected things I found in my own Google Search Console data. Several pages were generating impressions for queries I had never explicitly targeted: longer, question-form queries that looked like AI-assisted search behaviour. These impressions were not converting into clicks at traditional rates. But they were appearing, which suggested that AI systems may have been treating those pages as potential sources, even when no click followed.

That's important information. It tells you which content structures AI search systems find extractable, and which they don't. The pages generating AI-adjacent impressions shared consistent characteristics: clear definitions in the first 150 words, structured subheadings, short answer blocks, and first-person credibility signals (specific results, tools used, decisions made).

The Gap Between Traditional and AI Ranking Is Closing — but Unevenly

AI search is not replacing traditional search uniformly. It's accelerating in certain query categories — comparative queries, definition queries, process queries — while traditional search remains dominant for navigational, local, and highly specific technical queries.

Understanding where your target queries fall in that distribution changes how you build your framework. If your core cluster is definition-heavy or comparison-heavy, you need a higher proportion of AI-optimised content. If your cluster is dominated by technical how-to queries, traditional SEO signals still carry the most weight.
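One rough way to see where a cluster falls in that distribution is to bucket each query by type and compute each bucket's share of impressions. The sketch below assumes you already have (query, impressions) pairs, for example from a Search Console export. The phrase rules are hypothetical and deliberately simple.

```python
from collections import defaultdict

# Hypothetical phrase rules; checked in order, first match wins.
RULES = [
    ("comparative", (" vs ", " versus ", "difference between", "alternative to", "compared to")),
    ("definition", (" what is ", " what are ", "meaning of", "definition of")),
    ("process", (" how to ", " how do ", " steps to ", " guide to ")),
]

def categorise(query: str) -> str:
    """Return the first matching category label, or 'other'."""
    padded = f" {query.lower().strip()} "
    for label, phrases in RULES:
        if any(phrase in padded for phrase in phrases):
            return label
    return "other"

def impression_share(rows):
    """rows: iterable of (query, impressions). Returns {category: share}."""
    totals = defaultdict(int)
    for query, impressions in rows:
        totals[categorise(query)] += impressions
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {label: count / grand_total for label, count in totals.items()}

rows = [
    ("ai content strategy vs traditional content strategy for saas", 400),
    ("what is ai seo", 300),
    ("how to build an ai seo framework", 200),
    ("acme login", 100),  # made-up navigational query
]
for label, share in sorted(impression_share(rows).items()):
    print(f"{label:12} {share:.0%}")
```

If the comparative and definition buckets dominate, that is a hint the cluster needs a higher proportion of AI-optimised content. If "other" dominates, inspect those queries by hand before drawing any conclusion.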

I explored the full breakdown of how these two systems compare in AI search vs Google search — what's changing. The distinction matters for anyone building a framework right now because the weighting is actively shifting, and a framework built without that awareness will need constant retrofitting.

The SIGNAL Framework: A Data-Led System for AI SEO

After testing, failing, adjusting, and repeating this process across my own content and client work, I built a six-layer framework I call the SIGNAL Framework. Each layer is driven by real data, not assumption.

SIGNAL stands for: Search data collection, Intent mapping, Gap identification, No-fluff content architecture, Authority signal building, Live feedback loops.

Here's how each layer works.

Layer 1 — S: Search Data Collection

Before you write a single word, you need to know what real people are actually searching for. Not what keyword tools predict. Not what seems logical. What the data shows.

This layer involves three data sources working together.

Google Search Console query reports — filtered by page and by query — give you the clearest picture of how your existing content is performing in traditional search and where AI-adjacent impressions are appearing. Export at least 90 days of data. Look at queries generating impressions but low CTR. These are the intent gaps in your current content.

AI search query testing — using tools like Perplexity, ChatGPT Search, and Google AI Overviews — gives you a picture of which queries trigger AI-generated answers, and whether your content is being cited as a source. If you're not appearing in AI-generated responses for your core queries, that's a structural problem, not a volume problem.

Competitor query analysis — using Ahrefs, Semrush, or similar — shows you which queries your competitors are ranking for that you aren't. But in an AI SEO context, the more useful question is: which of those competitor pages are appearing in AI-generated answers, and what structural characteristics do they share?

I cover the full data collection process for this kind of competitive analysis in how to use AI to find content gaps your competitors are missing. The principle is the same whether you're operating in SaaS, personal brand, or service-based content.
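The "impressions but low CTR" check on a Search Console export can be sketched in a few lines of Python. The column names (`Query`, `Impressions`, `CTR`) and both thresholds are assumptions. Check the header row of your own export and rename accordingly.

```python
import csv
import io

def parse_ctr(value: str) -> float:
    """Accept '1.2%' or '0.012' and return a fraction."""
    value = value.strip()
    if value.endswith("%"):
        return float(value[:-1]) / 100
    return float(value)

def low_ctr_queries(csv_file, min_impressions=100, max_ctr=0.01):
    """Return (query, impressions, ctr) tuples for queries with many
    impressions and few clicks, sorted by impressions descending."""
    hits = []
    for row in csv.DictReader(csv_file):
        impressions = int(row["Impressions"].replace(",", ""))
        ctr = parse_ctr(row["CTR"])
        if impressions >= min_impressions and ctr <= max_ctr:
            hits.append((row["Query"], impressions, ctr))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)

# Made-up sample rows standing in for a real 90-day export.
SAMPLE = """Query,Clicks,Impressions,CTR,Position
ai seo framework,12,300,4%,8.1
how do i know if my seo content works for ai search,1,450,0.22%,14.3
signal framework seo,0,40,0%,21.0
"""

for query, impressions, ctr in low_ctr_queries(io.StringIO(SAMPLE)):
    print(f"{impressions:>6}  {ctr:.2%}  {query}")
```

With a real file, replace `io.StringIO(SAMPLE)` with `open("queries.csv", newline="", encoding="utf-8")`, where the filename is whatever your export is called.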

Layer 2 — I: Intent Mapping

Once you have real query data, you need to map it to intent. In an AI SEO framework, intent mapping requires more nuance than the traditional four-box model (informational, navigational, commercial, transactional).

Every query in your data set has three intent layers.

Surface intent is what the person typed. "How to build an AI SEO framework." Clear, direct, obvious.

Deep intent is what they actually want to achieve. They want a system that gives them predictable organic growth without requiring them to constantly reinvent their approach. They're probably tired of tactics and looking for structure.

Hidden intent is what they're afraid of or uncertain about. They might be worried that AI SEO is too technical for them to implement. They might have tried other frameworks and seen them fail. They might be unsure whether investing time in AI SEO now is premature given how fast the landscape is shifting.

Content that only answers surface intent ranks. Content that addresses all three layers ranks and converts.

This intent mapping exercise should be done for every cluster topic, not just every post. When you map intent at the cluster level, you can see which posts should serve which intent layers — and build a progression where each post naturally leads the reader deeper into the cluster.
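One possible way to record a cluster-level intent map is a small data structure that tracks which posts serve which intent layer. The class and field names below are hypothetical. The point is only that uncovered layers become easy to spot once the map is written down.

```python
from dataclasses import dataclass, field

LAYERS = ("surface", "deep", "hidden")

@dataclass
class IntentMap:
    surface: str  # what the person typed
    deep: str     # what they want to achieve
    hidden: str   # what they are afraid of or unsure about

@dataclass
class ClusterPlan:
    topic: str
    intent: IntentMap
    # Maps each post title to the set of intent layers that post serves.
    posts: dict = field(default_factory=dict)

    def uncovered_layers(self):
        """Return the intent layers that no post in the cluster serves."""
        covered = set().union(*self.posts.values())
        return [layer for layer in LAYERS if layer not in covered]

plan = ClusterPlan(
    topic="AI SEO framework",
    intent=IntentMap(
        surface="How to build an AI SEO framework",
        deep="Predictable organic growth without constantly reinventing the approach",
        hidden="Worry that AI SEO is too technical, or that it is too early to invest",
    ),
    posts={
        "Building an AI SEO framework": {"surface", "deep"},
        "Traditional SEO vs AI SEO": {"surface"},
    },
)
print(plan.uncovered_layers())
```

In this made-up plan, no post addresses the hidden layer, which points at the next post to write.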

For a detailed look at how traditional and AI intent comparison affects this mapping process, traditional SEO vs AI SEO — full breakdown covers how the intent signals differ across both environments and what that means for content design.

Layer 3 — G: Gap Identification

This is where the real competitive advantage lives, and most frameworks skip it or treat it superficially.

A content gap is not just a keyword you haven't written about. In an AI SEO context, a content gap is any question, concern, comparison, or angle that your target audience is searching for and that no current piece of content — including your own and your competitors' — is answering with precision and depth.

There are three types of gaps worth identifying.

Topic gaps: subjects your cluster hasn't covered at all. These are the easiest to find using keyword research tools but also the easiest to misjudge, because not all topic gaps are worth filling. The question is whether the query has intent alignment with your offer.

Depth gaps: subjects your cluster has touched but not covered with enough specificity, data, or nuance to satisfy the deep and hidden intent layers. These are usually visible in your GSC data as pages with decent impressions but poor engagement metrics.

Format gaps: subjects that have been covered but in the wrong structural format for how the query is actually being processed. A topic that consistently triggers AI-generated answers needs a different format than a topic where readers want a long-form guide. A 3,000-word essay written for a query that consistently returns a featured snippet or AI overview will underperform a 600-word, tightly structured answer.
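The three gap types can be expressed as a simple rule-based classifier. This is a sketch under stated assumptions: the record fields and every threshold are hypothetical, and "engagement rate" stands in for whichever engagement metric you actually track.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class QueryRecord:
    query: str
    page_url: Optional[str]           # None when no page in the cluster targets the query
    impressions: int = 0
    engagement_rate: float = 0.0      # any engagement metric, as a fraction
    triggers_ai_answer: bool = False  # noted during manual AI search testing
    page_word_count: int = 0

def classify_gap(record: QueryRecord,
                 min_impressions: int = 200,
                 min_engagement: float = 0.4,
                 max_words_for_answer: int = 1000) -> Optional[str]:
    """Return 'topic', 'format', 'depth', or None if no gap is detected."""
    if record.page_url is None:
        return "topic"
    if record.triggers_ai_answer and record.page_word_count > max_words_for_answer:
        return "format"
    if record.impressions >= min_impressions and record.engagement_rate < min_engagement:
        return "depth"
    return None

records = [
    QueryRecord("ai seo measurement", None),
    QueryRecord("what is ai seo", "/what-is-ai-seo", 900, 0.6, True, 3000),
    QueryRecord("ai content strategy for saas", "/ai-content-strategy", 500, 0.2, False, 1800),
]
for record in records:
    print(f"{classify_gap(record)!s:8} {record.query}")
```

A topic gap flagged this way still needs the manual check described above: whether the query has intent alignment with your offer.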

Layer 4 — N: No-Fluff Content Architecture

This is the layer most people think they've mastered and most people haven't.

No-fluff content architecture isn't about writing shorter. It's about structuring content so that every section does something specific — earns attention, builds trust, answers a question, moves the reader toward an action, or signals authority to an AI extraction system. Sections that don't do one of those things are fluff, regardless of how well-written they are.

For AI search specifically, the architecture of content matters as much as the content itself. AI systems extract answers from content — they don't read it the way a human does. They're looking for:

Clear definitions in the first 100–150 words

Question-form subheadings that map to real search queries

Structured answer blocks of 40–60 words that can be cleanly extracted

Tables, lists, and frameworks that compress complex information into a format AI systems can parse

First-person credibility signals — real data, specific decisions, named tools, actual results
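Three of those structural checks can be run automatically on a draft. The sketch below assumes the draft is Markdown with `##` subheadings and one paragraph per line, and it uses a crude pattern ("term is / are / means") to detect a definition. Both are simplifying assumptions, so treat the output as a prompt for manual review rather than a verdict.

```python
import re

def audit_draft(markdown: str, primary_term: str) -> dict:
    """Run three structural checks on a Markdown draft."""
    lines = markdown.splitlines()

    # Check 1: the primary term is defined within the first 150 body words.
    body_text = " ".join(line for line in lines if not line.startswith("#"))
    intro = " ".join(body_text.split()[:150]).lower()
    pattern = re.escape(primary_term.lower()) + r"\s+(is|are|means)\b"
    has_early_definition = re.search(pattern, intro) is not None

    # Check 2: share of subheadings phrased as questions.
    subheadings = [line.lstrip("#").strip() for line in lines if line.startswith("##")]
    question_count = sum(1 for heading in subheadings if heading.endswith("?"))

    # Check 3: the first paragraph under each subheading is 40-60 words.
    answer_lengths = []
    for index, line in enumerate(lines):
        if not line.startswith("##"):
            continue
        for following in lines[index + 1:]:
            if following.startswith("#"):
                break  # next heading reached without finding a paragraph
            if following.strip():
                answer_lengths.append(len(following.split()))
                break

    return {
        "has_early_definition": has_early_definition,
        "question_heading_ratio": question_count / len(subheadings) if subheadings else 0.0,
        "answer_blocks_in_range": sum(1 for n in answer_lengths if 40 <= n <= 60),
        "sections": len(subheadings),
    }

answer = " ".join(["word"] * 45)  # stand-in for a 45-word answer block
draft = "\n".join([
    "# AI SEO framework",
    "",
    "An AI SEO framework is a structured system for creating content.",
    "",
    "## What does an AI SEO framework need to do?",
    "",
    answer,
    "",
    "## Background",
    "",
    "Short paragraph.",
])
report = audit_draft(draft, "AI SEO framework")
print(report)
```

Credibility signals and table quality are not checked here, because they need human judgement.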

The reason so much AI-optimised content fails to appear in AI-generated answers is that it reads like it was written for AI but structured for humans — or vice versa. The SIGNAL Framework treats structure as a deliberate design decision, not an afterthought.

I've broken down why AI-generated content in particular tends to fall short of this standard in why AI content does not rank. The structural issues I describe there are directly relevant to understanding what no-fluff architecture actually requires in practice.

Layer 5 — A: Authority Signal Building

Authority in an AI SEO context is not just backlinks.

It's the combination of signals — across your content, your distribution, and your personal or brand positioning — that tells AI systems and search engines that you have genuine, demonstrated expertise on this topic.

The signals that matter most are:

First-hand experience markers. Specific results, named tools, actual workflows, real decisions with real consequences. "I tested this on 12 pieces of content over three months" is an authority signal. "Many experts believe" is not.

Topical consistency. AI systems evaluate authority at the cluster level, not the page level. A site that has published 15 deeply relevant pieces on AI SEO content strategy carries more authority for that topic than a site that has published one exceptional piece among 50 unrelated posts.

Citation and linking patterns. Being cited by other authoritative sources — even without a direct backlink — contributes to entity authority. Creating content that other writers in your space want to reference is an underrated distribution strategy.

Distribution and engagement signals. Content that gets shared, discussed, and linked to from multiple sources — LinkedIn posts, Reddit threads, newsletter features — generates the kind of behavioural signals that reinforce authority positioning in both traditional and AI search environments.

This is also why building a personal brand in parallel with content production is not vanity — it's a search strategy. I documented how I approached that for my own positioning in how I built my SEO personal brand from scratch. The principles translate directly to brand-level content systems as well.

Layer 6 — L: Live Feedback Loops

The final layer is the one that turns a framework into a system.

A framework without feedback loops is a one-time exercise. You build it, you implement it, and then it slowly becomes outdated as the search environment shifts, your competitors improve, and your own content cluster evolves. A system with live feedback loops is self-correcting — it continuously incorporates new data and adjusts the framework accordingly.

The feedback loop I use operates on three timescales.

Weekly — I review GSC data for any significant changes in impressions, positions, or CTR across my core cluster. I'm not looking for dramatic movements week-to-week; I'm looking for early signals that something is shifting before it becomes a problem.

Monthly — I conduct a query analysis across the full cluster, looking for new queries entering the data set that weren't present before. These are often signals of evolving intent — new questions your audience is asking as the topic develops or as your industry shifts.

Every 60–90 days — I run a full content audit against the current SERP for each target keyword. I look at whether the top-ranking pages have changed, whether AI-generated answers for my core queries have shifted, and whether any of my content has drifted out of alignment with current search intent. Any page that has dropped more than three positions or seen a CTR decline triggers a full intent realignment review.
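The monthly and 60–90 day checks can be sketched as two small functions: one that lists queries new to the data set, and one that applies the "more than three positions or a CTR decline" trigger. The `PageSnapshot` structure and the optional CTR tolerance are assumptions added for illustration.

```python
from dataclasses import dataclass

@dataclass
class PageSnapshot:
    url: str
    position: float  # average position; lower is better
    ctr: float       # fraction, e.g. 0.012 for 1.2%

def new_queries(previous_queries, current_queries):
    """Queries present in the current period but not in the previous one."""
    return sorted(set(current_queries) - set(previous_queries))

def needs_realignment(previous: PageSnapshot, current: PageSnapshot,
                      max_position_drop: float = 3.0,
                      min_ctr_decline: float = 0.0) -> bool:
    """Flag a page that dropped more than `max_position_drop` positions,
    or whose CTR fell by more than `min_ctr_decline`."""
    position_drop = current.position - previous.position
    ctr_decline = previous.ctr - current.ctr
    return position_drop > max_position_drop or ctr_decline > min_ctr_decline

# Made-up snapshots for one page, 60 days apart.
before = PageSnapshot("/ai-seo-framework", position=8.0, ctr=0.020)
after = PageSnapshot("/ai-seo-framework", position=12.5, ctr=0.020)

print(needs_realignment(before, after))
print(new_queries(["ai seo framework"], ["ai seo framework", "ai seo framework template"]))
```

Setting `min_ctr_decline` slightly above zero is one way to avoid flagging pages for ordinary week-to-week noise.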

The feedback loop is also where distribution connects back to content strategy. Engagement data from LinkedIn posts, Reddit threads, and email click-throughs tells me which angles are resonating with my audience right now — and that feeds directly back into Layer 1 for the next round of search data collection.

Case Study: Applying the SIGNAL Framework to a SaaS Content Cluster

Let me walk through a realistic application of this framework using a SaaS content cluster as the example — because this is the environment where I've seen it work most consistently.

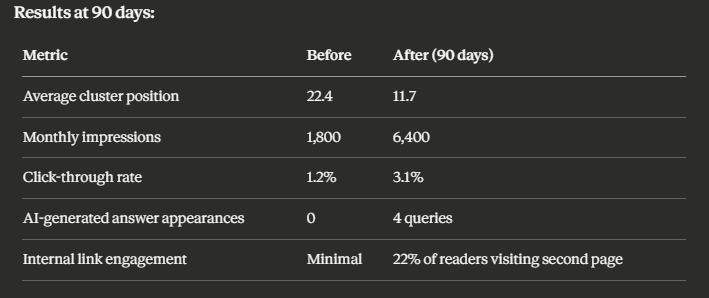

The cluster in question targeted AI content strategy for SaaS brands. Starting point: four published posts, average position 22, combined monthly impressions of approximately 1,800, CTR of 1.2%. The content was technically competent but had been produced without a framework — topically related pieces with no deliberate architecture.

Here's what the SIGNAL Framework revealed and what changed.

Layer 1 — Search data showed that the cluster was picking up impressions for a query set that was more comparative than the content addressed. People were searching "AI content strategy vs traditional content strategy for SaaS" and landing on posts that didn't make that comparison explicitly. There were also several longer, conversational queries generating AI-adjacent impressions — but none of the pages had the structured answer blocks needed to be extracted as AI responses.

Layer 2 — Intent mapping identified that the deep intent behind most queries was not to understand AI content strategy abstractly, but to decide whether to invest in building one. The hidden intent was largely risk-based — "what if I build this and it doesn't work, or I'm too early to the strategy?" The existing content addressed none of these layers.

Layer 3 — Gap analysis found two significant format gaps and one depth gap. The format gaps were a comparison post (AI vs traditional content strategy) and a step-by-step implementation guide. The depth gap was around measurement — readers wanted to know how to know if the strategy was working, and no existing post answered this with specificity.

Layer 4 — Architecture intervention involved restructuring three existing posts to include clear definition blocks, answer-format subheadings, and 40–60 word extractable summaries. Two new posts were written from scratch with the no-fluff architecture from the start.

Layer 5 — Authority signals were added through distribution — each post was repurposed into two LinkedIn posts with specific data points and personal results framing. The posts were also cross-referenced with three external authoritative sources in the field.

Layer 6 — Feedback loop was established with weekly GSC monitoring and a 60-day review scheduled.

The improvements weren't uniform — some posts moved significantly, others moved modestly. But the cluster as a whole began compounding, which is the behaviour a system produces and a collection of individual posts doesn't.

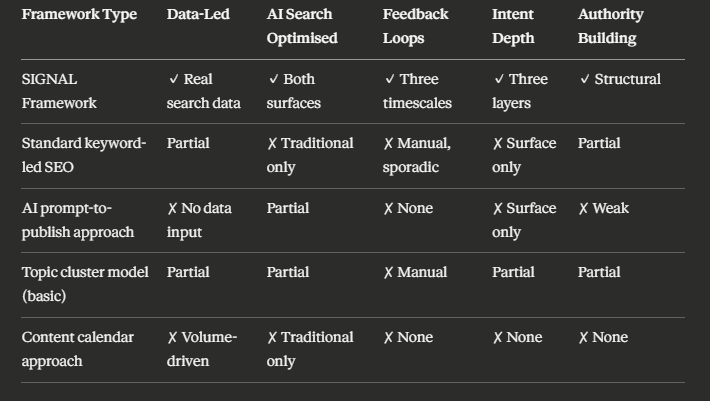

The AI SEO Framework Comparison Table

This is how the SIGNAL Framework compares to common alternative approaches — not to dismiss them, but to show clearly where each approach is strong and where it has gaps.

Statistics Worth Knowing

Three data points that ground the importance of getting this framework right:

According to SparkToro, AI-generated search results are now influencing query resolution for approximately 60% of informational searches in the United States — meaning content that isn't structured for AI extraction is missing visibility in the majority of informational search moments.

BrightEdge data found that pages appearing in AI-generated answers receive 35% more branded search queries in the 30 days following AI citation — the halo effect of AI visibility compounds into traditional search performance.

Ahrefs found that 73% of pages in the top-ranking positions for AI-adjacent queries share one structural characteristic: a clear, concise answer to the primary query within the first 150 words of the content.

Who This Framework Is For — and Who It Isn't

This framework is the right approach for you if you are building a content system for a SaaS product, a service-based brand, or a personal brand in a knowledge-intensive space. It works particularly well if you are already publishing content consistently and want a system that turns that volume into compounding organic performance.

It's also useful if you're managing content strategy for a team and need a shared framework that standardises decision-making across writers, strategists, and distribution specialists.

This framework is not a quick-start solution for someone who hasn't yet published enough content to have meaningful search data. You need at least 60–90 days of indexed content before Layers 1 and 6 become useful. If you're earlier in the process, start with the fundamentals of building a content engine — I documented that process in how I build traffic systems using a content engine playbook — and return to the SIGNAL Framework once you have data to work with.

The 0 to 20K Impressions Parallel

One thing I want to address directly, because it comes up frequently when I talk about frameworks: the question of whether this level of systematic thinking is necessary at lower traffic volumes.

My honest answer is yes — because the habits you build at the beginning determine the compounding curve you achieve later.

When I was building from zero impressions, I wasn't operating the full SIGNAL Framework. But the principles of data-led decision making, intent mapping, and feedback loops were present from the start. The discipline of checking what the data was saying before making the next content decision is what created the growth trajectory I documented in SEO growth strategy — 0 to 20k impressions.

Volume matters. Consistency matters. But volume without a framework produces a traffic ceiling. Volume with a framework produces a growth curve.

The Biggest Mistakes I Made Building This

Let me be direct about where I went wrong, because these are the mistakes I see most often.

I optimised for AI search too early and too narrowly. There was a period where I was so focused on structured data and entity optimisation that I neglected the fundamentals — depth, clarity, genuine helpfulness. AI search systems don't reward technical signals at the expense of substance. They reward substance that is also technically structured. Get the substance right first.

I confused content production with content strategy. Publishing consistently is necessary. But I went through a period of treating velocity as the metric — number of posts, word counts, publishing frequency. What actually mattered was whether each piece was adding to a coherent system. If you're making similar mistakes, the post on biggest mistakes SaaS founders make with AI content covers many of the same patterns I experienced and corrected.

I underinvested in distribution signals. Early AI search visibility correlates with content that gets actively shared and discussed. Treating distribution as an afterthought — something to do if there's time after publishing — consistently weakened the authority signals on pages that would otherwise have been competitive. Distribution is not promotion. It's a search signal.

I delayed building the feedback loop. This is the most common delay I see in content teams. The feedback loop feels like a maintenance task rather than a strategic activity. In reality, the feedback loop is where the competitive advantage compounds. Content without a review cycle decays. Content with an active review cycle improves continuously.

Scaling This Framework: What Changes at Higher Volumes

One practical question worth answering: does the SIGNAL Framework scale when you're producing 8–12 posts per month rather than 2–3?

Yes, but the layer that needs the most structural support at higher volumes is Layer 6 — the feedback loop. At lower volumes, you can maintain a meaningful review cycle manually. At higher volumes, you need a templated review process, consistent tracking in a shared dashboard (Google Search Console's comparison view works well for this), and a clear escalation rule for when a page needs intervention versus when it needs time.

The other layer that changes at scale is Layer 5. Authority signal building through distribution becomes a system in itself at higher content volumes — you need a repeatable repurposing workflow rather than ad hoc social posts. I've written about this in the content repurposing workflow SOP — the principle of turning every post into multiple distribution formats is what keeps authority signals compounding as volume increases.

The core architecture of the framework stays the same. What changes is the operational infrastructure around it.

The Intersection of AI SEO and SaaS Growth

For SaaS specifically, I want to add one layer of nuance that isn't always present in general AI SEO discussion.

SaaS content has a distinct intent distribution. A meaningful proportion of your highest-value queries — the ones from people who are closest to becoming customers — are commercial investigative queries. "Best AI content tool for SaaS." "Alternative to [competitor]." "Does [tool category] actually work?"

These queries are increasingly being resolved in AI-generated answers. Which means that SaaS brands who don't appear in those answers are losing decision-stage visibility to competitors who do.

The SIGNAL Framework addresses this specifically through Layer 2 (intent mapping) and Layer 3 (gap identification). Commercial investigative queries have a distinct intent architecture — the reader is evaluating, not learning — and content that tries to serve them with educational material will consistently underperform content that serves the evaluation need directly.

If you're building a content system specifically for a SaaS product and want to see how the full AI SEO architecture applies at that level, the complete system to rank in AI search in 2026 covers the SaaS-specific implementation in detail. The SIGNAL Framework integrates with that system directly.

Summary

Here are the key points from this post:

The SIGNAL Framework is a six-layer, data-led AI SEO system: Search data collection, Intent mapping, Gap identification, No-fluff content architecture, Authority signal building, and Live feedback loops

Most AI SEO frameworks fail because they're built on theory rather than real search data, and because they optimise for content production volume rather than content performance outcomes

Real search data reveals three things that most frameworks miss: the structural difference between traditional and AI search queries, where AI-adjacent impressions are appearing in your GSC data, and the uneven distribution of AI search dominance across different query types

Intent mapping requires three layers — surface, deep, and hidden — and content that only addresses surface intent will rank but not convert

No-fluff content architecture is the layer most often misunderstood — it's not about writing shorter, it's about structuring every section to perform a specific function for both human readers and AI extraction systems

Authority signals in AI SEO extend beyond backlinks to include first-person credibility markers, topical consistency, citation patterns, and distribution-driven engagement signals

Live feedback loops operating at weekly, monthly, and 60–90 day timescales are what separate a framework from a one-time strategy exercise

The framework scales but requires operational infrastructure at higher volumes — particularly around the review cycle and the distribution repurposing workflow

What You Should Do Next

If you're building an AI SEO framework from scratch, start with Layer 1. Export your last 90 days of GSC query data and look at where your current content is generating impressions. That data will tell you more about where to focus than any keyword tool.

If you're already publishing consistently but not compounding, the most likely issue is that you have content without a system. Mapping your existing cluster against the six SIGNAL layers will show you exactly which layer is missing or underperforming.

If you want to build a scalable AI content system for a SaaS product, how to build an AI content system for a SaaS blog covers the full implementation from cluster design to distribution — and the SIGNAL Framework sits at the core of that system.

If you're ready to build this with expert support, get in touch here. I work with SaaS brands and independent content strategists to design and implement AI SEO content systems that produce measurable, compounding results.

Frequently Asked Questions

1. What makes an AI SEO framework different from a traditional SEO strategy?

A traditional SEO strategy focuses primarily on keyword targeting, backlink acquisition, and on-page optimisation for Google's algorithm. An AI SEO framework must additionally optimise for extractability by AI-generated answer systems — meaning content needs clear definitions, structured answer blocks, first-person credibility signals, and topical authority signals that AI search engines evaluate differently from traditional ranking factors.

2. How much search data do I need before I can build this framework?

A meaningful starting point requires at least 60–90 days of indexed content generating impressions in Google Search Console. Ideally, you want a minimum of 5–10 published pieces in your core cluster before the query data is large enough to show reliable patterns. Earlier than that, the data is too thin, and you're better served building foundational content first.

3. Can the SIGNAL Framework work for a personal brand, or is it only for SaaS?

It works for both. The core layers — search data collection, intent mapping, gap identification, content architecture, authority building, and feedback loops — apply to any content system where organic search performance is a goal. The specific signals and intent patterns will differ between a SaaS product cluster and a personal brand cluster, but the framework logic is the same.

4. How long does it take to see results from implementing this framework?

In my experience, the first meaningful signals (improvement in average positions, CTR changes, new query appearances in GSC) tend to appear between 30 and 60 days after implementation. Significant compounding growth typically becomes visible at the 90-day mark. The feedback loop layer (Layer 6) becomes most useful between 60 and 120 days, when you have enough post-implementation data to make informed adjustments.

5. Does schema markup fit into the SIGNAL Framework?

Yes. Schema sits within Layer 4 (No-fluff content architecture) as the technical implementation layer. Schema markup, particularly Article, FAQPage, and HowTo schema, supports AI extractability by giving search systems a machine-readable version of the content structure. However, schema is the amplification layer, not the foundation. Content must be well-structured and intent-aligned before schema adds meaningful value.
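As an illustration of that machine-readable version, here is a minimal Python sketch that builds FAQ markup as JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper name `faq_jsonld` is hypothetical, and the answer text in the example is abbreviated.

```python
import json

def faq_jsonld(pairs) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

markup = faq_jsonld([
    (
        "Does schema markup fit into the SIGNAL Framework?",
        "Yes. Schema sits within Layer 4 as the technical implementation layer.",
    ),
])
print(markup)
```

The resulting string would typically be placed inside a `<script type="application/ld+json">` element on the page that visibly contains the same questions and answers.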