AI SEO for Landing Pages: How to Structure Pages That Rank and Convert

AI SEO (AI-assisted search engine optimisation) improves landing-page performance primarily by compressing the research → creation → optimisation → testing loop, while expanding the amount of evidence a team can consider (SERP patterns, intent cues, competitor coverage, technical signals, and conversion behaviour). When used well, it tends to deliver faster iteration and more consistent on‑page execution; when used poorly, it can amplify low-value “scaled content,” factual errors, and brand-damaging inconsistencies.

Several recent developments make a rigorous, measurement-led approach essential. First, modern search increasingly incorporates generative features (e.g., AI summaries/overviews, AI-organised result experiences), which can depress click-through rates (CTR) relative to classic “blue link” behaviour for some query classes—raising the ROI of conversion rate optimisation (CRO) and the importance of being a cited/selected source. Second, Google’s policies explicitly warn that using automation (including generative AI) to produce many pages without adding user value may violate spam policies (notably “scaled content abuse”), so AI output must be governed, reviewed, and differentiated. Third, performance and user experience remain tightly connected to business outcomes; for example, a web.dev case study reports that QuintoAndar reduced INP by 80% and observed a 36% year‑over‑year increase in conversions in the same period, illustrating why Core Web Vitals and landing-page speed belong inside the SEO workflow rather than beside it.

The most robust operating model is “hybrid”: use AI systems to draft, cluster, score, and triage—then use human editors and subject-matter experts to validate facts, ensure E‑E‑A‑T signals (experience, expertise, authoritativeness, trust), align with brand/legal constraints, and prioritise experiments.

Definitions and Mechanisms

AI SEO for landing pages can be defined as: the use of machine learning (ML), natural language processing (NLP), and automation to plan, produce, optimise, and test landing-page content and technical implementation in order to improve visibility across search surfaces (classic SERPs and generative/search-assistant surfaces) and improve conversion outcomes from that traffic. This sits on top of the fundamentals of crawling, indexing, ranking, and search appearance.

How AI SEO “works” in practice

NLP and semantic understanding (topic/entity modelling).

Modern search engines use AI systems to interpret language and intent, not just exact-match keywords. In Google’s own ranking-systems documentation, examples include BERT (understanding how word combinations express meaning and intent), neural matching (matching concepts in queries and pages), and RankBrain (relating words to concepts to return relevant content even without exact wording).

AI SEO tools mirror this by clustering keywords into topics, extracting entities and attributes, and mapping copy to the language patterns that appear in top-ranking results. The goal is to create a landing page whose conceptual coverage matches the query’s intent while remaining concise and conversion-focused.

Intent detection and SERP pattern inference.

Intent detection typically blends: (a) query modifiers (e.g., “buy,” “price,” “near me”), (b) SERP composition (ads density, shopping modules, local packs, featured snippets), and (c) competitor page archetypes. This aligns with the idea that search systems use many factors to rank and present results, and that the visible SERP elements reflect what the engine believes the user is trying to accomplish.
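A minimal sketch of how a tool might blend (a) query modifiers and (b) observed SERP features into a rough intent score. The modifier lists, feature names such as `local_pack`, and the `serp_weight` parameter are hypothetical illustrations, not any vendor's model. Signal (c), competitor archetypes, would need a separate input.

```python
from dataclasses import dataclass, field

# Hypothetical modifier lists; tune these for your market and language.
MODIFIERS = {
    "transactional": {"buy", "price", "pricing", "cost", "deal", "order"},
    "local": {"near me", "nearby", "open now"},
    "comparison": {"vs", "versus", "alternative", "alternatives", "best", "compare"},
    "informational": {"how", "what", "why", "guide", "tutorial"},
}

# Hypothetical mapping from observed SERP features to the intent they hint at.
SERP_HINTS = {
    "shopping": "transactional",
    "ads_top": "transactional",
    "local_pack": "local",
    "featured_snippet": "informational",
    "people_also_ask": "informational",
}


@dataclass
class SerpObservation:
    query: str
    features: set[str] = field(default_factory=set)


def score_intent(obs: SerpObservation, serp_weight: float = 0.5) -> dict[str, float]:
    """Blend query-modifier matches with SERP-feature hints into per-intent scores."""
    padded = f" {obs.query.lower()} "  # pad so multi-word modifiers match on word boundaries
    scores = {intent: 0.0 for intent in MODIFIERS}
    for intent, terms in MODIFIERS.items():
        scores[intent] += sum(1.0 for t in terms if f" {t} " in padded)
    for feature in obs.features:
        hinted = SERP_HINTS.get(feature)
        if hinted:
            scores[hinted] += serp_weight
    return scores


def primary_intent(obs: SerpObservation) -> str:
    """Highest-scoring intent, or 'unclassified' when no signal fired."""
    scores = score_intent(obs)
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unclassified"
```

In this sketch a query such as "buy running shoes" with a shopping module scores as transactional. A production system would calibrate the weights against labelled SERPs rather than fix them by hand.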

In 2026, AI SEO often extends beyond “informational vs transactional” into “task completion” design: pages must answer quickly and drive the next step.

Semantic search and “query fan‑out” effects.

Some AI SEO platforms explicitly describe “query fan‑out” awareness—i.e., how one topic expands into many related questions/prompts—so a landing page can capture a wider surface of demand (supporting sections, FAQs, comparisons, objections).

This logic is consistent with research on generative engines and optimisation frameworks that measure “visibility” across generated answers.
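Fan-out coverage can be approximated by routing each related query to a candidate page section. This Python sketch assumes you already have a list of related queries (from a keyword tool or prompt research); the section names and regex rules are hypothetical and would need tuning per market.

```python
import re

# Hypothetical routing rules, checked in order: the first matching pattern wins.
SECTION_RULES = [
    ("pricing", re.compile(r"\b(price|pricing|cost|how much)\b")),
    ("comparison", re.compile(r"\b(vs|versus|alternatives?|compared?)\b")),
    ("objections", re.compile(r"\b(is it safe|secure|worth it|cancel|refund|contract)\b")),
    ("faq", re.compile(r"^(how|what|why|when|can|does|do|is)\b")),
]


def route_fanout(queries: list[str]) -> dict[str, list[str]]:
    """Assign related queries to candidate landing-page sections."""
    sections: dict[str, list[str]] = {name: [] for name, _ in SECTION_RULES}
    sections["unassigned"] = []
    for query in queries:
        q = query.lower().strip()
        for name, pattern in SECTION_RULES:
            if pattern.search(q):
                sections[name].append(query)
                break
        else:  # no rule matched
            sections["unassigned"].append(query)
    return sections
```

Queries left in `unassigned` are the interesting ones for a human editor: they either need a new section or do not belong on this page.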

SERP feature optimisation (rich results, snippets, titles).

AI can assist with eligibility and optimisation for SERP features by generating structured data, FAQs, and concise answer blocks—but eligibility is governed by search engine rules. Google’s structured data documentation emphasises following general guidelines and content policies to be eligible for rich results, and recommends JSON‑LD as an implementation format.
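As an illustration of drafting JSON‑LD without inventing data, the sketch below builds a schema.org `Organization` object only from facts supplied to it and omits anything missing. The `build_organization_jsonld` helper and its property subset are assumptions for this example. The output would still go through a rich results test, and in HTML it is typically embedded inside a `<script type="application/ld+json">` element.

```python
import json

# Hypothetical subset of schema.org Organization properties this helper will emit.
ALLOWED_PROPERTIES = ("name", "url", "logo", "telephone", "email")


def build_organization_jsonld(facts: dict[str, str]) -> str:
    """Build Organization JSON-LD from supplied facts only; never invent values."""
    data = {"@context": "https://schema.org", "@type": "Organization"}
    for key in ALLOWED_PROPERTIES:
        value = facts.get(key, "").strip()
        if value:  # omit missing or blank values rather than guessing them
            data[key] = value
    if "name" not in data:
        raise ValueError("Organization name is required; refusing to guess it")
    return json.dumps(data, indent=2)
```

Omitting a missing property, rather than publishing a placeholder, keeps the markup consistent with what is actually on the page.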

Title links and snippets are also partially under engine control: Google may use on-page and off-page signals to generate title links, especially if crawling is restricted. Meta descriptions can inform snippets, but Google may choose alternative text if it better describes the page for a query. AI SEO uses these rules to produce “best-effort” titles/descriptions and to structure content so the engine can reliably extract the intended summary.
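Because Google may generate its own title link when the page title does not reflect the page, one cheap pre-publication check is whether the title and H1 share their core terms and whether a meta description exists at all. This is a hypothetical heuristic (the stopword list and the 50% overlap threshold are arbitrary), not a model of how Google generates title links.

```python
import re

# Hypothetical minimal stopword list; extend for your language.
STOPWORDS = {"a", "an", "and", "for", "the", "to", "of", "in", "with", "your"}


def content_terms(text: str) -> set[str]:
    """Lowercased alphanumeric tokens minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def check_title_alignment(title: str, h1: str, meta_description: str,
                          min_overlap: float = 0.5) -> list[str]:
    """Return warnings when title, H1, and meta description drift apart."""
    warnings = []
    title_terms, h1_terms = content_terms(title), content_terms(h1)
    if not title_terms:
        warnings.append("title is empty")
    if h1_terms:
        # Share of the H1's core terms that also appear in the title.
        overlap = len(title_terms & h1_terms) / len(h1_terms)
        if overlap < min_overlap:
            warnings.append(f"title covers only {overlap:.0%} of H1 terms")
    else:
        warnings.append("H1 is empty")
    if not meta_description.strip():
        warnings.append("meta description is empty")
    return warnings
```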

Content scoring and gap analysis (on-page relevance heuristics).

Many AI SEO tools compute a content score or provide data‑driven optimisation recommendations by comparing a draft against top-ranking documents and extracted terms/entities. For example, Surfer markets a “Content Score” and positions it as a data-backed on-page optimisation metric. These scores are not search engine ranking factors; they are internal heuristics meant to reduce guesswork and standardise editorial QA.
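The core idea behind coverage-style scores can be sketched as set arithmetic: find terms that appear on most competitor pages, then measure what share of them the draft covers. This is a simplified stand-in, not Surfer's or any other vendor's scoring formula, and it assumes terms or entities have already been extracted from each page.

```python
from collections import Counter


def term_coverage(draft_terms: set[str], competitor_term_sets: list[set[str]],
                  min_share: float = 0.5) -> tuple[float, list[str]]:
    """Share of 'common' competitor terms that the draft covers, plus the gaps.

    A term counts as common if it appears on at least `min_share` of competitor pages.
    """
    if not competitor_term_sets:
        return 0.0, []
    counts = Counter(t for terms in competitor_term_sets for t in terms)
    threshold = min_share * len(competitor_term_sets)
    common = {t for t, c in counts.items() if c >= threshold}
    if not common:
        return 0.0, []
    gaps = sorted(common - draft_terms)
    return 1 - len(gaps) / len(common), gaps
```

The gap list is more useful than the score itself: each gap is a prompt for an editor to decide whether the concept genuinely belongs on a conversion-focused page.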

On-page and technical SEO automation.

AI SEO automation increasingly covers:

Meta and snippet controls (e.g., drafting titles/descriptions; using snippet-control directives such as nosnippet, max-snippet, and data-nosnippet where appropriate).

Crawling/indexing hygiene (robots, canonicals, internal linking).

Auditing at scale (broken links, redirects, duplicate pages, structured data validation).

This matters because search engines themselves perform deduplication to avoid showing unhelpfully similar results.
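Auditing at scale starts with extracting the same few elements from every page. The sketch below uses Python's standard-library `html.parser` to pull the title, meta description, robots meta directives, and canonical link, then flags obvious problems. It is a simplification built on stated assumptions: it ignores `X-Robots-Tag` HTTP headers, JavaScript-rendered markup, and whether the canonical URL itself resolves.

```python
from html.parser import HTMLParser


class SeoElementParser(HTMLParser):
    """Collect title, meta description, robots directives, and canonical from one page."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.meta_description = None
        self.robots: list[str] = []
        self.canonical = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        a = {k: (v or "") for k, v in attrs}  # attribute names arrive lowercased
        if tag == "title":
            self._in_title = True
        elif tag == "meta":
            name = a.get("name", "").lower()
            if name == "description":
                self.meta_description = a.get("content", "")
            elif name in ("robots", "googlebot"):
                self.robots += [d.strip().lower() for d in a.get("content", "").split(",")]
        elif tag == "link" and "canonical" in a.get("rel", "").lower().split():
            self.canonical = a.get("href")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_html(html: str) -> list[str]:
    """Return a list of on-page issues found in a single HTML document."""
    p = SeoElementParser()
    p.feed(html)
    issues = []
    if not p.title.strip():
        issues.append("missing <title>")
    if not p.meta_description:
        issues.append("missing meta description")
    if "noindex" in p.robots or "none" in p.robots:  # "none" implies noindex, nofollow
        issues.append("page is noindex")
    if not p.canonical:
        issues.append("missing canonical link")
    return issues
```

Running `audit_html` over every fetched landing page, then grouping identical titles or descriptions across URLs, gives a first pass at the duplication problems described above.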

Tools and platforms landscape

Pricing and plan details change frequently; figures below reflect what was publicly available in the cited sources (most pages were checked in February 2026) and should be verified during procurement. Where a vendor does not publish pricing, it is labelled Custom or noted as not public.

| Category | Vendor / Platform | Core Landing-Page Uses | Strengths | Weaknesses / Constraints | Pricing | Sources |
|---|---|---|---|---|---|---|
| Keyword research + SERP intelligence | Semrush | Keyword discovery, competitor analysis, audits, briefs, rank tracking | All-in-one toolkit covering research, audits, tracking, and content tools | Pricing page incomplete; limits vary by plan | Pro $139.95/mo; Guru $249.95/mo | 18 |
| Keyword/backlink intelligence | Ahrefs | Keyword research, backlinks, audits, content research | Strong backlink database; tiered plans | Credit-based limits can increase costs | $29–$1,499/mo | 20 |
| Content optimisation + AI visibility | Surfer | Briefs, entities, internal linking, AI visibility | AI scoring, internal linking, and plagiarism tools | Score is a heuristic, not a ranking guarantee | $49–$999/mo | 14 |
| Enterprise content optimisation | Clearscope | Topic modelling, drafts, monitoring | Intent-driven recommendations; inventory monitoring | Costs rise with scale | $129–custom | 9 |
| Research + brief generation | Frase | SERP research, briefs, drafts, audits | All plans include research, optimisation, and API access | Monthly limits on articles/audits | $39–$239/mo | 22 |
| Content strategy | MarketMuse | Topic modelling, planning, scoring | AI strategy and inventory insights | Pricing not public | Custom | 23 |
| LLM automation | OpenAI | Copy drafts, schema, QA, automation | API with transparent pricing | Hallucination risk | $20/mo + usage-based API | 26 |
| Copy generation | Jasper | Brand voice, CTA variants, messaging | Marketing-focused workflows | Needs training for brand accuracy | From $59/seat | 27 |
| AI SEO platform | Writesonic | Articles, automation, visibility tracking | Tracks AI search visibility | Risk of thin content | $49–$249/mo | 28 |
| WordPress technical SEO | Yoast | Meta tags, schema, on-page optimisation | Automated technical SEO | WordPress only | $118.80/year | 30 |
| WordPress SEO + schema | Rank Math | Meta control, schema, analytics | Powerful schema generator | Renewal costs vary | From $7.99/mo | 31 |
| Enterprise schema | Schema App | Knowledge graph + structured data | Fully customisable implementation | Custom pricing only | Custom | 33 |
| AI schema SEO | WordLift | Structured data + internal linking | Entity-focused optimisation | High-tier pricing | €799–€999/mo | 34 |
| Technical crawler | Screaming Frog | Technical issue detection | Clear licensing; deep crawl data | Requires expertise | Free–£199/year | 35 |
| Technical audits | Sitebulb | Visual audits + prioritised fixes | Detects 300+ issue types | Pricing partly hidden | From £95/mo | 36 |
| Image optimisation | Cloudinary | Compression, tagging, alt text | AI auto-tagging | Alt text needs review | $99–$249/mo | 37 |
| Performance monitoring | SpeedCurve | RUM + synthetic testing | Tracks performance regressions | Fixes still require engineering | From $90/mo | 39 |
| Core Web Vitals monitoring | Calibre | CrUX + synthetic monitoring | Field-data tracking | Data can be complex to interpret | $75–$1,500/mo | 41 |
| Experimentation | Optimizely | A/B testing + personalisation | Enterprise experimentation suite | Pricing not public | Custom | 43 |
| Testing + analytics | VWO | Behaviour analytics + testing | Trial available; broad optimisation suite | Quote required | Custom | 45 |
| AI targeting tests | AB Tasty | A/B testing + AI targeting | Multi-armed bandit optimisation | Pricing not public | Custom | 46 |
| B2B personalisation | Mutiny | Segment-based messaging | Dynamic CTAs + social proof | Pricing not public | Custom | 47 |

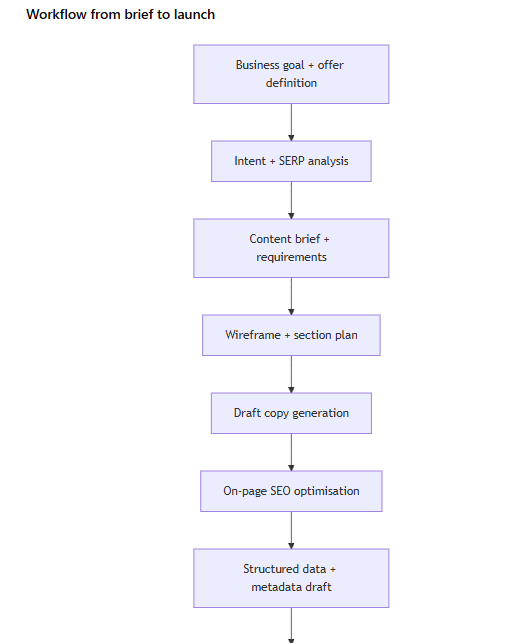

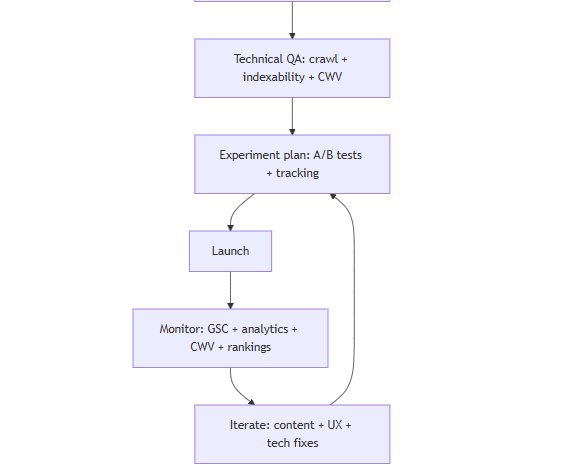

End-to-end workflow and implementation timeline

A rigorous AI SEO workflow treats landing-page creation as an experimentable system with gates: research → brief → draft → optimisation → technical QA → launch → measure → iterate. This aligns with Google’s emphasis on people-first content and on avoiding automation whose purpose is to manipulate rankings.

How AI fits at each step (and what must remain human-led):

Business goal + offer definition (human-led). AI can summarise market language, but the value proposition, pricing, claims, and legal constraints must be owned by the business, because errors here carry the greatest legal and reputational risk.

Intent + SERP analysis (AI-assisted). Use AI SEO tools to cluster keywords by intent, extract SERP feature opportunities, and map competitor archetypes. This responds to how modern ranking systems interpret intent and concepts.

Brief + requirements (AI-assisted, human-approved). Generate a structured brief that includes: target query set, user intent, required proof points, differentiation, trust signals, and measurement plan. Ground the brief in search guidelines (titles/snippets, structured data policies).

Wireframe + section plan (hybrid). AI proposes sections (hero, benefit proof, comparison, FAQs, objection handling); human designers ensure scannability, accessibility, and conversion flow, reflecting search guidance's emphasis on page experience.

Draft copy generation (AI-first draft, human final). AI creates variants quickly; humans validate claims, align tone, and meet E‑E‑A‑T “who/how/why” disclosure expectations where appropriate.

On-page SEO optimisation (AI-assisted). Use content scoring/gap tools, but treat recommendations as hypotheses and don't force keywords into copy where they do not fit. Google explicitly discourages search-engine‑first content and warns against extensive automation for ranking manipulation.

Metadata + structured data (AI-drafted, validated). AI can draft schema in JSON‑LD; humans validate accuracy and eligibility. Structured data must follow guidelines and content policies to be eligible for rich results.

Technical QA (automation-led, engineer-approved). Crawl with a tool, confirm indexability, validate robots directives, and measure Core Web Vitals.

Testing + launch (measurement-led). Ensure attribution and key-event tracking are configured (GA4 key events; Search Console CTR/position).
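The gated workflow above can be sketched as an ordered list of stages, each with an owner and a pass/fail check, so a page cannot advance past a failed gate. The stage names, owners, and page fields (`brief_approved`, `unverified_claims`, and so on) are hypothetical. In practice each gate would be backed by a review ticket, a crawler result, or an analytics check.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stage:
    name: str
    owner: str                    # who signs off, e.g. "human", "engineer"
    gate: Callable[[dict], bool]  # returns True when the page may advance


def run_pipeline(page: dict, stages: list[Stage]) -> str:
    """Advance a page through stages in order; stop at the first failed gate."""
    for stage in stages:
        if not stage.gate(page):
            return f"blocked at {stage.name} (owner: {stage.owner})"
    return "ready to launch"


# Hypothetical gates loosely mirroring the steps above; field names are illustrative.
STAGES = [
    Stage("offer definition", "human", lambda p: bool(p.get("value_prop"))),
    Stage("brief approval", "human", lambda p: p.get("brief_approved", False)),
    Stage("claims review", "human", lambda p: not p.get("unverified_claims")),
    Stage("structured data", "human", lambda p: p.get("schema_valid", False)),
    Stage("technical QA", "engineer", lambda p: p.get("indexable", False)),
    Stage("measurement", "analyst", lambda p: bool(p.get("key_events"))),
]
```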

KPIs and measurement framework

A landing page is “top-performing” only when rankings and CTR translate into conversions, and when page experience is strong enough to sustain both. Google’s Search Console documentation defines clicks, impressions, average CTR, and average position; these are the backbone SEO metrics for landing pages.

| Objective | Primary KPIs | Secondary / Diagnostic Metrics | Measurement Sources & Notes |
|---|---|---|---|
| Search visibility | Impressions, average position | Query/URL segmentation, device/country breakouts | Search Console Performance report and metric definitions. |
| Search demand capture | Clicks, CTR | Title rewrites, snippet usefulness | Meta descriptions may be used for snippets but not guaranteed; title links can be generated from page or external signals. |
| Conversion performance | Conversion rate, conversion volume, CPA | Funnel drop-off, form completion, scroll depth, CTA CTR | GA4 key events are standard for tracking important actions. |
| Engagement quality | Bounce rate / engaged sessions, time on page | Segment behaviour by device, source, intent | Use consistent definitions; avoid mixing tracking methodologies. |
| Page experience | Core Web Vitals (LCP, INP, CLS) | Errors, regressions, 75th percentile distribution | Search Console CWV report uses field data. |
| Technical SEO hygiene | Indexability, canonicals, redirects, schema validity | Crawl depth, internal linking | Crawler audits + structured data testing; robots meta controls indexing. |
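When Search Console data is exported at query level, page-level CTR should be recomputed from totals rather than averaged across rows, and average position is best weighted by impressions. The row format below (`page`, `clicks`, `impressions`, `position`) is an assumed flattened export, and the impression-weighted position only approximates the figure Search Console reports for a page.

```python
from collections import defaultdict


def aggregate_by_page(rows: list[dict]) -> dict[str, dict[str, float]]:
    """Roll query-level rows up to page level.

    CTR = total clicks / total impressions (not a mean of row CTRs);
    average position is weighted by impressions.
    """
    totals = defaultdict(lambda: {"clicks": 0.0, "impressions": 0.0, "pos_x_impr": 0.0})
    for r in rows:
        t = totals[r["page"]]
        t["clicks"] += r["clicks"]
        t["impressions"] += r["impressions"]
        t["pos_x_impr"] += r["position"] * r["impressions"]
    out = {}
    for page, t in totals.items():
        impr = t["impressions"]
        out[page] = {
            "clicks": t["clicks"],
            "impressions": impr,
            "ctr": t["clicks"] / impr if impr else 0.0,
            "avg_position": t["pos_x_impr"] / impr if impr else 0.0,
        }
    return out
```

Averaging row CTRs instead would give a low-impression query the same influence as a high-volume one, which is the usual source of misleading landing-page CTR figures.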

Case studies and empirical evidence

Evidence in this space is uneven: vendor case studies often lack controls, while controlled SEO experiments and academic benchmarks provide stronger inference. The most decision-useful evidence tends to show variance—AI can help, but outcomes depend on domain competitiveness, market, and execution quality.

Controlled SEO experimentation evidence

A controlled SEO split test reported by SearchPilot tested adding AI-generated content to travel pages. The published write-up reports a 13% increase in organic sessions in the US market, while the Australia market was inconclusive (confidence interval spanning positive and negative outcomes). This supports two practical conclusions relevant to landing pages:

AI-assisted rewrites can improve organic performance in some contexts, and

the same approach may fail or be ambiguous elsewhere—so teams should treat AI SEO recommendations as testable hypotheses, not universal rules.

A later SearchPilot write-up adds that including a disclosure (“This content was generated by AI”) did not appear to “bear any weight” in how Google ranked or valued the content in that test context (no numeric uplift stated in the visible text, but the qualitative conclusion is explicit). This is consistent with Google’s broader guidance that “how” content is produced can be disclosed when users would expect it, and that the key question is whether the content is made primarily to help people rather than manipulate rankings.

Academic and benchmark-based evidence on “AI search” and GEO

A research line on Generative Engine Optimisation (GEO) formalises optimisation for generative engines and introduces benchmarks and “visibility” metrics. One paper reports that GEO strategies can boost visibility in generative engine responses by up to 40%, with variation across domains. A 2025 paper extends the theme, claiming controlled experiments show systematic biases in AI search sourcing and proposing an “engine-specific” strategy agenda.

For landing pages, the actionable implication is not “write for bots,” but rather: improve machine scannability and justification (clear claims, structured evidence, consistent terminology, and trustworthy citations/links), because generative systems synthesise across sources.
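One simple way to track referenceability is a citation share: of a sample of AI-generated answers for your target queries, what fraction cite your domain at least once. This is a hypothetical, deliberately crude metric, not the position-adjusted visibility measures defined in the GEO research; how answers and their cited URLs are collected is assumed and out of scope.

```python
from urllib.parse import urlparse


def citation_share(answers: list[list[str]], domain: str) -> float:
    """Fraction of sampled AI answers that cite at least one URL on `domain` or its subdomains."""
    if not answers:
        return 0.0

    def on_domain(url: str) -> bool:
        host = (urlparse(url).hostname or "").lower()
        return host == domain or host.endswith("." + domain)

    hits = sum(1 for cited in answers if any(on_domain(u) for u in cited))
    return hits / len(answers)
```

Tracked per query cluster over time, the share gives a directional signal; it says nothing about how prominently the page is used within each answer.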

Evidence on changing click behaviour due to AI summaries

In 2025, Pew Research Center reported that when an AI summary appeared, users were less likely to click links to other websites (traditional result link clicks in 8% of visits with an AI summary vs 15% without).

This does not eliminate SEO value, but it does shift optimisation priorities toward:

higher SERP attractiveness (titles/snippets that match intent),

deeper conversion optimisation (traffic is scarcer/more valuable), and

being reliably “referenceable” (earned coverage + structured data + trust signals).
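To make “traffic is scarcer” concrete, here is a worked example under a deliberately simple model (an assumption, not a finding of the Pew study): conversions C equal search visits V times the probability p of clicking a traditional result link times the landing-page conversion rate r, with V and r unaffected by whether an AI summary appears. Using Pew's 15% and 8% figures as p:

```latex
\begin{aligned}
\Delta_{\text{rel}} &= \frac{0.15 - 0.08}{0.15} \approx 0.467 \\[4pt]
C &= V \, p \, r
\;\;\Rightarrow\;\;
\frac{r_{\text{AI summary}}}{r_{\text{no summary}}}
  = \frac{p_{\text{no summary}}}{p_{\text{AI summary}}}
  = \frac{0.15}{0.08} = 1.875
\end{aligned}
```

Under these assumptions, link clicks fall by roughly 47% on queries that show an AI summary, and the landing page's conversion rate would need to rise by about 1.9× to hold conversions constant.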

Web performance evidence tied to conversion outcomes

Google’s Core Web Vitals documentation frames CWV as real‑world UX metrics and recommends that site owners achieve good Core Web Vitals for success with Search and for a good user experience generally. The Search Console CWV report is based on LCP, INP, and CLS measured from real user (field) data.
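Core Web Vitals are assessed at the 75th percentile of field data against published thresholds: LCP is good at or below 2.5 s and poor above 4 s; INP is good at or below 200 ms and poor above 500 ms; CLS is good at or below 0.1 and poor above 0.25. The sketch below applies those thresholds to raw samples using a nearest-rank percentile. CrUX and Search Console aggregate differently, so treat its output as an approximation, not a reproduction of either report.

```python
import math

# (good upper bound, poor lower bound); LCP and INP in milliseconds, CLS unitless.
THRESHOLDS = {"LCP": (2500, 4000), "INP": (200, 500), "CLS": (0.1, 0.25)}


def p75(values: list[float]) -> float:
    """75th percentile using the nearest-rank method."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def assess(field_samples: dict[str, list[float]]) -> dict[str, str]:
    """Classify each metric's p75 as good / needs improvement / poor."""
    result = {}
    for metric, samples in field_samples.items():
        good, poor = THRESHOLDS[metric]
        value = p75(samples)
        if value <= good:
            result[metric] = "good"
        elif value <= poor:
            result[metric] = "needs improvement"
        else:
            result[metric] = "poor"
    return result
```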

A web.dev case study reports that QuintoAndar reduced INP by 80% and observed a 36% year‑over‑year increase in conversions, alongside large shifts in the share of pages meeting “good” thresholds.

For landing pages, this is a concrete reminder that technical SEO and “page experience” are not separate from revenue, and that AI SEO programmes which ignore CWV can plateau.

Risks, biases, and quality control

The dominant risk pattern in AI SEO is scale without value: the same automation that produces helpful drafts for one page can also generate hundreds of near-duplicate pages that trigger spam policies or simply fail to convert.

Policy and ranking risks

Google explicitly warns that using generative AI tools to generate many pages without adding value may violate spam policies on scaled content abuse. Google’s “people-first” guidance also recommends evaluating “who, how, and why” content is created, and notes that if automation is used primarily to attract search visits, that is not aligned with what systems seek to reward.

This means AI SEO programmes must implement “value gates”: unique insights, first-hand experience, original data, or strong product/service specificity, rather than generic summaries.

Hallucinations and factual reliability

Hallucinations—confident but false outputs—remain a known model failure mode. For landing pages, hallucinations are particularly dangerous because they can create:

false claims (legal exposure),

incorrect pricing/features, and

misleading comparisons.

A practical control is to require AI drafts to cite internal sources (pricing sheets, product docs) and to block publication until a human verifies all “hard claims” (numbers, guarantees, compliance statements). This matches Google’s emphasis on trust and accurate authorship/production context.
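The “block until verified” control can be partially automated by flagging sentences that contain hard claims (prices, percentages, other numbers, absolute wording such as “guarantee”) not present in a list of verified facts. The regex patterns and exact-string matching are hypothetical and crude; the goal is to route sentences to a human reviewer, not to decide truth automatically.

```python
import re

# Hypothetical "hard claim" patterns; alternation order matters (money, then %, then numbers).
HARD_CLAIM = re.compile(
    r"[£$€]\s?\d+(?:[.,]\d+)*"                        # money: £29, $1,499
    r"|\d+(?:[.,]\d+)*\s?%"                           # percentages: 99.9%
    r"|\b\d+(?:[.,]\d+)*\b"                           # other numbers: 24, 300
    r"|\b(?:guarantee[sd]?|certified|compliant)\b",   # absolute wording
    re.IGNORECASE,
)


def unverified_claims(draft: str, verified_facts: set[str]) -> list[str]:
    """Return sentences containing a hard claim not found verbatim in the verified facts."""
    known = {f.lower() for f in verified_facts}
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", draft.strip()):
        claims = [m.group(0).lower() for m in HARD_CLAIM.finditer(sentence)]
        if any(c not in known for c in claims):
            flagged.append(sentence)
    return flagged
```

Any non-empty result would keep the page at the claims-review gate until a reviewer either adds the fact to the verified list (with its source) or removes the claim.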

Duplicate content and thin differentiation

Search engines use deduplication systems to avoid unhelpful duplication in results, and Google also notes deduplication can affect featured snippets. If AI outputs are templated across many landing pages with minimal differentiation, you risk:

weak rankings due to sameness,

cannibalisation (pages competing for the same intent), and

lower conversion due to generic messaging.

Mitigations include intent-based page architecture, canonical rules, and deliberate differentiation (audience-specific proof, vertical-specific use cases).
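Near-duplicate landing pages can be detected before launch by comparing word shingles (overlapping k-word sequences) with Jaccard similarity. This sketch compares every pair, which is fine for tens or hundreds of pages; larger sites would typically switch to MinHash or a similar approximate method. The default k=3 and 0.8 threshold are assumptions to tune, not standards.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Word k-grams ('shingles') of a page's visible copy."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """|A ∩ B| / |A ∪ B|, defined as 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def near_duplicates(pages: dict[str, str], threshold: float = 0.8, k: int = 3):
    """Yield (url_a, url_b, similarity) for page pairs at or above the threshold."""
    sigs = {url: shingles(text, k) for url, text in pages.items()}
    for (u1, s1), (u2, s2) in combinations(sigs.items(), 2):
        sim = jaccard(s1, s2)
        if sim >= threshold:
            yield u1, u2, round(sim, 3)
```

Location pages that differ only by a city name typically score well above unrelated pages, which makes them a common candidate for this check.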

E‑E‑A‑T and disclosure practices

Google’s documentation explains E‑E‑A‑T as a conceptual framework where trust is most important; it also notes that disclosures about automation can be useful when users would reasonably ask “How was this created?”.

For landing pages, E‑E‑A‑T tends to be strengthened by:

clear company identity and contact info,

evidence-backed claims (case studies, benchmarks, third-party certifications),

author/reviewer context where relevant, and

accurate structured data (organisation markup, product/service details).

Legal and ethical risks in the UK/EU context

If AI workflows process personal data (e.g., personalisation, intent cohorts, lead enrichment, session replay), data protection and transparency obligations apply. The UK Information Commissioner's Office publishes guidance on AI and data protection. In the EU, the European Parliament summarises that the EU AI Act entered into force in August 2024 with phased applicability, noting key dates such as the rules on prohibited practices applying from February 2025 and the later timelines for general-purpose AI transparency obligations. The European Commission maintains an official AI Act policy page (updated January 2026), which should be treated as the primary reference point for current status.

For privacy-focused implementation details, France’s CNIL has published GDPR-oriented recommendations addressing AI system development and transparency.

Practical checklists and templates

This section provides operational templates designed for hybrid AI + human landing-page production, explicitly aligned to search guidance on snippets, structured data, page experience, and AI content use.

Step-by-step landing-page AI SEO checklist

Strategy and intent

Define the conversion goal and the single primary “next action” (request demo, buy, sign up).

Identify primary intent and secondary intents (comparison, objections, FAQs).

Decide which SERP features are plausible (rich results, sitelinks, FAQ-like sections where appropriate).

Brief and content

Produce a one-page brief with: target keyword cluster, audience, promise, proof points, exclusions (what you will NOT claim), compliance notes, and differentiation.

Require evidence for every quantitative claim (internal doc, public source, or tracked metric).

Add “E‑E‑A‑T fields”: who authored/reviewed, what experience is being demonstrated, and what makes the page trustworthy.

On-page SEO

Draft the title and H1 so that their meaning aligns; ensure the on-page title is clear and prominent (to reduce title-link rewrites and improve extraction).

Draft the meta description as a truthful “pitch”; remember that Google may use it for the snippet, but this is not guaranteed.

Include scannable sections answering key questions; avoid fluff and filler.

Add internal links that are crawlable and descriptive.

Structured data

Draft JSON‑LD schema only for what is actually present and policy-compliant.

Validate via a rich results testing tool.

Technical QA

Confirm robots directives (no accidental noindex).

Crawl the page(s) to check titles, meta, redirects, canonicals, duplicates, and structured data validity.

Measure CWV using field-data sources where possible; remember INP replaced FID in March 2024.

Launch and iteration

Create GA4 key events and confirm attribution.

Monitor Search Console impressions/clicks/CTR/position by query and page.

Run controlled experiments where feasible; expect market variance.

Landing-page brief template

Use this as a copy/paste structure (if you do not yet know a field's value, fill it in explicitly as “Unspecified”):

Landing page name:

Offer / product:

Primary conversion action: (e.g., “Book a demo”)

Target audience: (industry, role, sophistication)

Primary intent: (transactional / evaluation / comparison / local / support)

Primary query cluster: (5–15 terms)

Secondary query clusters: (optional)

SERP observations: (ads density, AI summary presence, rich results, competitor archetypes)

Unique value proposition: (1 sentence)

Proof points: (case studies, certifications, metrics; include sources)

Objections to answer: (top 5)

Legal/compliance constraints: (claims you cannot make; required disclaimers)

E‑E‑A‑T signals to include: (author/reviewer, company details, experience evidence)

Structured data targets: (Organisation, Product/Service where applicable; avoid unsupported types)

Performance targets: (LCP/INP/CLS “good” thresholds; mobile-first assumptions)

Measurement plan: (Search Console metrics + GA4 key events)

A/B test candidates: (1–3 hypotheses)
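Teams that store briefs as structured data can enforce the “fill in Unspecified explicitly” rule programmatically. The dataclass below covers a hypothetical subset of the template's fields and reports which ones are still open; the 5–15 query check mirrors the template's guidance.

```python
from dataclasses import dataclass, fields

UNSPECIFIED = "Unspecified"


@dataclass
class LandingPageBrief:
    # Hypothetical subset of the template fields; extend with the rest as needed.
    name: str = UNSPECIFIED
    offer: str = UNSPECIFIED
    primary_conversion: str = UNSPECIFIED
    target_audience: str = UNSPECIFIED
    primary_intent: str = UNSPECIFIED
    primary_queries: tuple[str, ...] = ()
    unique_value_proposition: str = UNSPECIFIED
    proof_points: tuple[str, ...] = ()
    legal_constraints: str = UNSPECIFIED
    measurement_plan: str = UNSPECIFIED

    def open_fields(self) -> list[str]:
        """Fields still Unspecified or empty; the brief is not ready until this is empty."""
        return [f.name for f in fields(self)
                if getattr(self, f.name) in (UNSPECIFIED, "", ())]

    def query_count_ok(self) -> bool:
        """The template asks for 5 to 15 terms in the primary query cluster."""
        return 5 <= len(self.primary_queries) <= 15
```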

Prompt templates for AI-assisted drafting

Prompt for intent-aligned outline (brief → structure)

“Create a landing-page section plan for:

Offer: {…}

Audience: {…}

Primary intent: {…}

Primary conversion: {…}

Constraints: No unsupported claims; label any uncertain statements as ‘Unspecified’.

Include sections that improve scannability and answer likely follow-up questions.

Propose 3 CTA variants.

Output: section-by-section outline with purpose, key points, and suggested evidence.”

Prompt for SEO-safe title/meta drafts (avoid manipulation)

“Draft 10 title link candidates and 10 meta description candidates for {page topic}.

Rules:

Must be truthful and match on-page content.

Avoid clickbait; prioritise clarity.

Provide a short rationale per draft linking it to user intent.

Reminder: meta descriptions may be used for snippets, but this is not guaranteed.”

Prompt for schema drafting (JSON‑LD)

“Generate JSON‑LD schema for this landing page using only information explicitly provided below.

Use JSON‑LD format.

Do not invent properties.

If a required value is missing, output ‘Unspecified’ and flag it.

Information: {paste structured facts}”
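Because models sometimes add plausible-looking properties anyway, the draft can be checked against the facts that were actually supplied. This sketch flags top-level values that do not appear in the supplied facts, any ‘Unspecified’ placeholders, and nested objects (such as ratings) for manual review. The `invented_values` helper is hypothetical and deliberately conservative.

```python
import json


def invented_values(jsonld: str, facts: dict[str, str]) -> list[str]:
    """List properties whose values were not supplied in `facts`, or are still Unspecified.

    Only top-level string values are compared; anything else is sent to manual review.
    """
    data = json.loads(jsonld)
    supplied = {str(v).strip() for v in facts.values()}
    problems = []
    for key, value in data.items():
        if key.startswith("@"):
            continue  # @context / @type are structural, not facts
        if isinstance(value, str):
            if value == "Unspecified":
                problems.append(f"{key}: Unspecified (fill in or remove before publishing)")
            elif value.strip() not in supplied:
                problems.append(f"{key}: value not found in supplied facts")
        else:
            problems.append(f"{key}: non-string value, review manually")
    return problems
```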

A/B test template for landing pages

Hypothesis: If we {change X}, then {metric Y} will improve because {reason grounded in intent/UX}.

Primary metric: (e.g., demo-request key event rate)

Secondary metrics / guardrails: bounce/engagement, lead quality proxy, CWV regressions.

Traffic allocation: 50/50 (or bandit, if your platform supports dynamic allocation).

Audience segmentation: device-first (mobile vs desktop), then channel (organic vs other).

Minimum runtime: set by expected conversion volume and seasonality stability (document assumptions).

Implementation notes: avoid cloaking; ensure both variants are crawlable and indexable unless intentionally restricting indexing.

Result write-up: include effect size, confidence/credible interval (depending on stats engine), and implementation decision (roll out / iterate / abandon). Controlled SEO test case studies illustrate why confidence intervals and market differences matter.
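For on-page CRO tests with a fixed horizon, the runtime and write-up steps can be grounded in two standard calculations: an approximate sample size per variant for a two-proportion z-test, and a Wald confidence interval for the difference in conversion rates. This is a sketch under textbook assumptions (independent visitors, no peeking, no bandit allocation). SEO split tests of the SearchPilot kind use different, model-based methods, and a testing platform's own stats engine should take precedence.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(p_base: float, mde_abs: float,
                        alpha: float = 0.05, power: float = 0.8) -> int:
    """Visitors per variant to detect an absolute lift `mde_abs` (two-sided z-test approximation)."""
    z_a = NormalDist().inv_cdf(1 - alpha / 2)
    z_b = NormalDist().inv_cdf(power)
    p_var = p_base + mde_abs
    variance = p_base * (1 - p_base) + p_var * (1 - p_var)
    return math.ceil((z_a + z_b) ** 2 * variance / mde_abs ** 2)


def diff_ci(conv_a: int, n_a: int, conv_b: int, n_b: int, level: float = 0.95):
    """Effect size (rate B minus rate A) and its Wald confidence interval."""
    pa, pb = conv_a / n_a, conv_b / n_b
    se = math.sqrt(pa * (1 - pa) / n_a + pb * (1 - pb) / n_b)
    z = NormalDist().inv_cdf(0.5 + level / 2)
    diff = pb - pa
    return diff, (diff - z * se, diff + z * se)
```

For example, detecting a lift from a 5% to a 6% conversion rate at α = 0.05 and 80% power needs about 8,155 visitors per variant, which, divided by daily landing-page traffic, gives the minimum runtime before seasonality adjustments.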